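Getting Started with MCP Development

MCP gives large language models a uniform way to call programs automatically, which greatly widens what they can be used for. It is currently a very popular technology.

This article briefly shows how to develop MCP servers and clients in the mainstream technology stacks.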

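How It Works

MCP (Model Context Protocol) is a protocol that enables LLMs (large language models) to interact with external tools and data sources.

Put simply: an LLM normally deals only with text. A system prompt sets the rules, and the user's conversational text (the user prompt) is then fed in, so that the LLM responds to the user within those preset rules. But much of what users need done has to be carried out by programs, which a text-only LLM cannot call directly. Various techniques have therefore emerged to let LLMs execute programs, and MCP is arguably the leading one today. These techniques all work in much the same way: based on the user's natural-language input, the LLM fills in a method's parameters; the method is executed and returns data; the LLM then analyzes the returned data and composes the final answer the user wants.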
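To make "the LLM fills in a method's parameters" more concrete, here is a minimal sketch of how a plain Python function could be described to an LLM as tool metadata. The `get_weather` function, the `describe_tool` helper, and the type mapping are all hypothetical and exist only for illustration; MCP SDKs typically produce comparable JSON Schema metadata for you.

```python
import inspect
import json

# Hypothetical mapping from Python annotations to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def get_weather(city: str, days: int = 1) -> str:
    """Return a made-up weather forecast for a city."""
    return f"{city}: sunny for the next {days} day(s)"


def describe_tool(func) -> dict:
    """Build name/description/inputSchema metadata from a function signature."""
    properties = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        # Unknown annotations fall back to "string" in this sketch.
        properties[name] = {"type": _JSON_TYPES.get(param.annotation, "string")}
        # Parameters without a default value are treated as required.
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


metadata = describe_tool(get_weather)

if __name__ == "__main__":
    print(json.dumps(metadata, indent=2))
```

Given metadata like this, the model can work out from a request such as "what is the weather in Paris for three days" that `city` and `days` need values, without ever seeing the function body.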

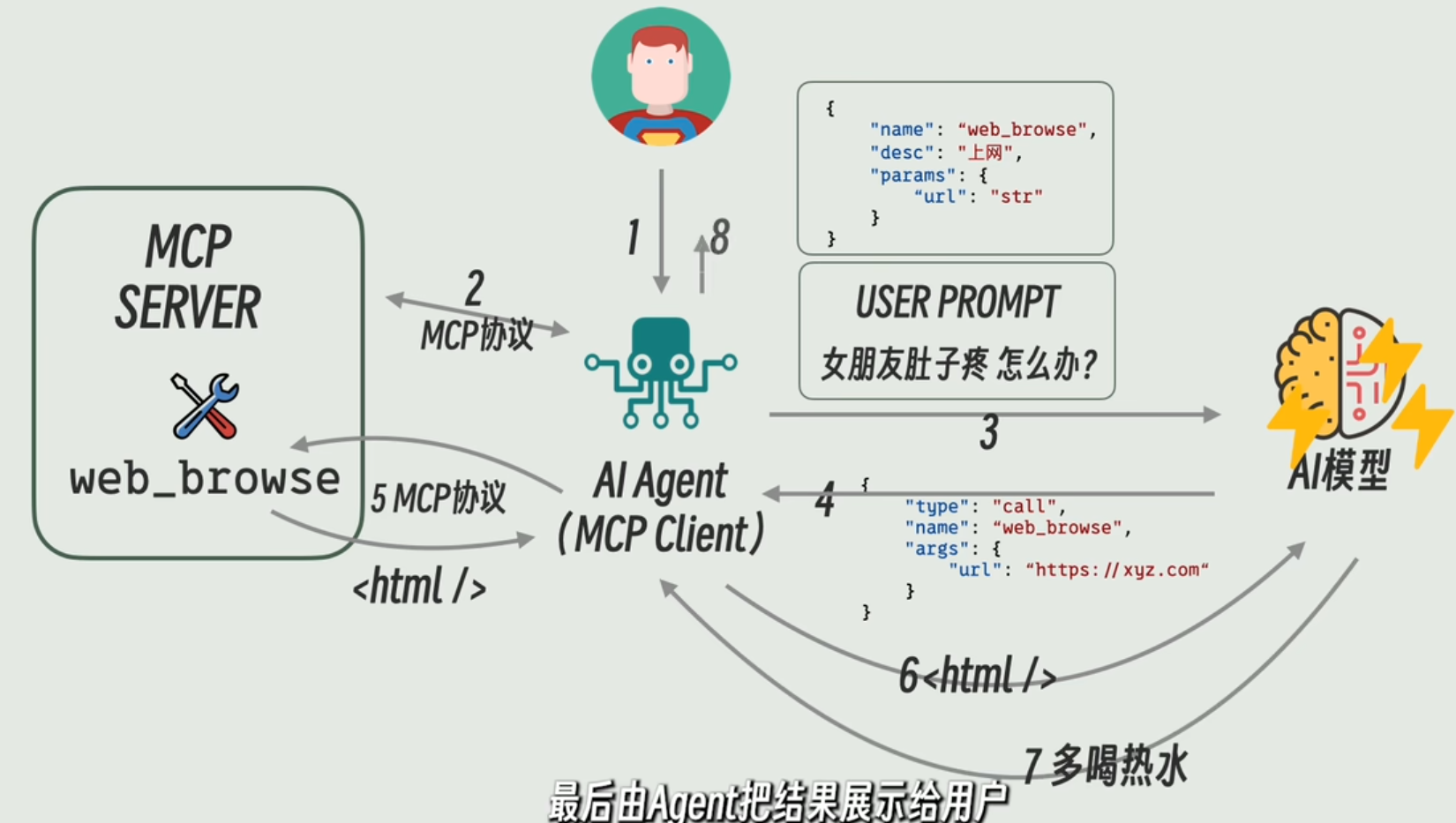

网络上有很多制作优良的图片可以很直观体现MCP的大致原理,下图是其一:

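The basic flow is:

- The user asks a question in natural language;

- The MCP client fetches the metadata of all tools from the MCP server;

- The MCP client sends the tool metadata to the LLM as part of the prompt, together with the user prompt;

- The LLM works out which MCP tools need to be called and what arguments to pass to them;

- The MCP tools are called and return their results, and the MCP client sends the tool return values to the LLM;

- The LLM combines the tool results into a final answer and returns it to the user.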
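The steps above can be sketched as a small loop. This is only a simulation under stated assumptions: there is no real MCP server or LLM involved, `fake_llm` is a hard-coded stand-in for the model, and the `add` tool, `TOOLS` registry, and all function names are hypothetical.

```python
def add(a: int, b: int) -> int:
    """Hypothetical tool implementation."""
    return a + b


# Hypothetical in-process registry standing in for an MCP server.
TOOLS = {
    "add": {
        "description": "Add two integers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
            "required": ["a", "b"],
        },
        "handler": add,
    }
}


def list_tools() -> list:
    """Step 2: the client fetches tool metadata from the server."""
    return [
        {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
        for name, t in TOOLS.items()
    ]


def call_tool(name: str, arguments: dict) -> str:
    """Step 5: the tool runs and its result is returned as text."""
    return str(TOOLS[name]["handler"](**arguments))


def fake_llm(messages: list, tools: list) -> dict:
    """Stand-in for a real LLM. It requests the `add` tool once with fixed
    arguments, then turns the tool result into a final answer."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "answer", "text": f"The result is {last['content']}."}
    return {"type": "tool_call", "name": "add", "arguments": {"a": 2, "b": 3}}


def answer(question: str) -> str:
    messages = [{"role": "user", "content": question}]  # step 1
    tools = list_tools()  # step 2
    while True:
        reply = fake_llm(messages, tools)  # steps 3 and 4
        if reply["type"] == "answer":
            return reply["text"]  # step 6
        result = call_tool(reply["name"], reply["arguments"])  # step 5
        messages.append({"role": "tool", "content": result})


if __name__ == "__main__":
    print(answer("What is 2 + 3?"))
```

The loop matters because a real model may ask for several tool calls in a row before it is ready to answer; the client keeps feeding results back until the model stops requesting tools.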

The MCP client development described below follows this flow closely.

Server Development

Python

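It depends on the following packages; some of them are included only to configure cross-origin (CORS) access for the service: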

dependencies = [

Main program:

from pathlib import Path

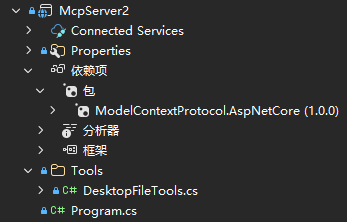
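Whatever framework is used, an MCP server ultimately answers JSON-RPC 2.0 requests such as `tools/list` and `tools/call`. The sketch below shows that dispatch using only the standard library. It is not the main program referred to above: it leaves out the transport, the initialization handshake, and most error handling, and the `echo` tool and `handle_request` function are hypothetical.

```python
import json


def echo(text: str) -> str:
    """Hypothetical tool: return the input unchanged."""
    return text


# Hypothetical registry of the tools this server exposes.
TOOLS = {
    "echo": {
        "func": echo,
        "description": "Echo the given text.",
        "inputSchema": {
            "type": "object",
            "properties": {"text": {"type": "string"}},
            "required": ["text"],
        },
    }
}


def handle_request(raw: str) -> str:
    """Handle one JSON-RPC request string and return the response string."""
    request = json.loads(raw)
    request_id = request.get("id")
    method = request["method"]
    if method == "tools/list":
        # Expose metadata only; the Python callables stay on the server.
        result = {
            "tools": [
                {
                    "name": name,
                    "description": tool["description"],
                    "inputSchema": tool["inputSchema"],
                }
                for name, tool in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        params = request["params"]
        output = TOOLS[params["name"]]["func"](**params["arguments"])
        result = {"content": [{"type": "text", "text": str(output)}], "isError": False}
    else:
        # -32601 is the JSON-RPC 2.0 "Method not found" error code.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request_id, "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})


if __name__ == "__main__":
    call = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {"name": "echo", "arguments": {"text": "hi"}},
    }
    print(handle_request(json.dumps(call)))
```

In practice a server SDK takes care of this plumbing, so the author of a tool usually only writes the function and its description.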

C#

Use the ModelContextProtocol.AspNetCore package to simplify MCP server development:

Register the MCP tools directly by tool class name:

var builder = WebApplication.CreateBuilder(args);

Define the MCP tools, using attributes to mark and describe the tool methods:

using ModelContextProtocol.Server;

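Client Development

Clients for different technology stacks follow essentially the same principle and workflow: define the client with the official MCP package, and connect to the LLM with the OpenAI client library.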
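Connecting the two halves mostly comes down to translating tool metadata from the MCP shape into the shape the LLM API expects. As a minimal sketch, assuming the metadata has already been read into plain dictionaries with `name`, `description`, and `inputSchema` keys, the following converts it into the `tools` list used by OpenAI-style chat completion requests. The `to_openai_tools` helper and the sample `get_weather` tool are hypothetical.

```python
def to_openai_tools(mcp_tools: list) -> list:
    """Convert MCP-style tool metadata into OpenAI-style function tools."""
    return [
        {
            "type": "function",
            "function": {
                "name": tool["name"],
                "description": tool.get("description", ""),
                # MCP's inputSchema is already JSON Schema, so it is reused as-is.
                "parameters": tool["inputSchema"],
            },
        }
        for tool in mcp_tools
    ]


# Hypothetical metadata, shaped like one entry of a tools/list result.
mcp_tools = [
    {
        "name": "get_weather",
        "description": "Return the weather forecast for a city.",
        "inputSchema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    }
]

openai_tools = to_openai_tools(mcp_tools)

if __name__ == "__main__":
    print(openai_tools)
```

Because both sides describe parameters with JSON Schema, the conversion is a simple re-wrapping rather than a real translation, which is part of why the client code looks so similar across languages.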

Python

It depends on the following packages:

dependencies = [

Main program:

import asyncio
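One step of a client that is easy to get wrong is turning the model's tool requests into follow-up messages. The sketch below is not the main program above; it assumes the assistant message is available as a plain dictionary in the OpenAI chat format (the real client library returns objects with the same fields as attributes) and that tool execution is hidden behind a callback. `run_tool_calls` and `fake_call_tool` are hypothetical names.

```python
import json
from typing import Any, Callable


def run_tool_calls(assistant_message: dict, call_tool: Callable[[str, dict], Any]) -> list:
    """Execute each requested tool and build the "tool" role messages
    that should be appended to the conversation."""
    replies = []
    for call in assistant_message.get("tool_calls") or []:
        # The model sends arguments as a JSON string, not as an object.
        arguments = json.loads(call["function"]["arguments"])
        result = call_tool(call["function"]["name"], arguments)
        replies.append(
            {"role": "tool", "tool_call_id": call["id"], "content": str(result)}
        )
    return replies


def fake_call_tool(name: str, arguments: dict) -> int:
    """Hypothetical stand-in for forwarding the call to an MCP server."""
    if name == "add":
        return arguments["a"] + arguments["b"]
    raise ValueError(f"unknown tool: {name}")


assistant_message = {
    "role": "assistant",
    "content": None,
    "tool_calls": [
        {
            "id": "call_1",
            "type": "function",
            "function": {"name": "add", "arguments": '{"a": 2, "b": 3}'},
        }
    ],
}

replies = run_tool_calls(assistant_message, fake_call_tool)

if __name__ == "__main__":
    print(replies)
```

Each reply carries the `tool_call_id` of the request it answers, so the model can match results to calls when it asked for more than one tool.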

TypeScript

It depends on the following npm packages:

"dependencies": {

Main program:

import { OpenAI } from "openai";

.NET

It depends on the following NuGet packages:

Main program:

using ModelContextProtocol.Client;

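(When reposting articles from this site, please credit the author and the source, lihaohello.top. Do not use them for any commercial purpose.)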